Celestica: The Most Important AI Stock You’re Probably Not Aware Of

My deep dive into how Celestica (CLS) evolved from EMS afterthought to Big Tech’s AI infrastructure partner—riding a hyperscaler capex boom with sticky margins and custom rack wins.

It was a summer night, and my banker called to invite me to a Blue Jays game. I didn’t want to go—I knew the routine. She thought taking me out might improve her chances of financing the debt for the company I was acquiring. Yes, there’d be a nice booth, good food, and networking opportunities, but I wasn’t in a schmoozing mood. My wife convinced me to get out and have some fun—wives are never wrong—so I listened and went.

I’ve been in a booth at the Rogers Centre many times, even back before Rogers bought it in 2005—when it was still called the SkyDome. As expected, the booth had around ten people: the banker, her analyst, and a mix of investors. After brief introductions, subgroups quickly formed. One was up front taking selfies for Instagram, another was lining up for pizza, and one group was holding beers and talking business. Somehow, I ended up in the third group. But what I really wanted was a slice of Canadian (pepperoni, crumbled bacon, and mushroom—best pizza ever, especially with a creamy garlic dip). I scanned for a tactical exit, but it was tight quarters. And then the topic turned to stocks… and all I wanted was pizza.

Everyone was trying to flex their stock-picking smarts. It felt like teenagers around a bonfire passing the joint to the left—except here, they were passing around stock tips instead.

I knew exactly what was coming, so I started mentally flipping through my holdings to see if anything was interesting enough to share...

…time’s up.

Then it happened—the joint landed in my lap. Maybe two seconds passed, but it felt like an eternity.

…chirp…

…chirp…

…chirp…

“George, just pick something—any stock—they’re all boring anyway,” I told myself.

I tried to sound confident. “Celestica,” I said.

Silence. One guy looked at another, puzzled. “Celestica? The electronics company?!”

“Yeah, the one on Yonge Street.”

More silence…

…

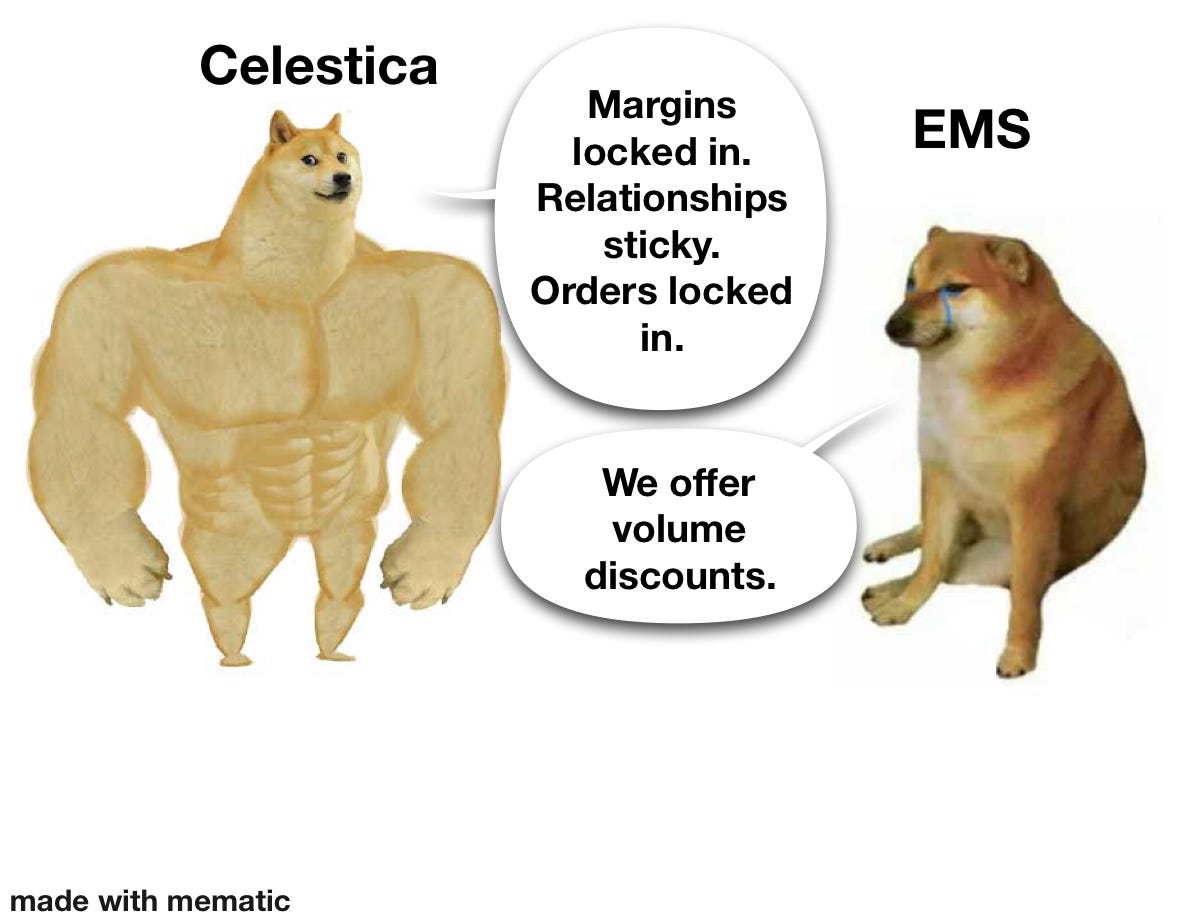

Then the hedge fund guy smiled. “Really? That company sucks—single-digit margins, commoditized business, no edge. I hope you don’t have much riding on it.”

I wanted to kill the convo and get my slice. “Nah, just a starter position. Now, if you’ll excuse me, I’ve gotta hit the washroom.”

Two lies right there. I didn’t go to the washroom—and Celestica (CLS) was 4% of my portfolio. Top 10 holding.

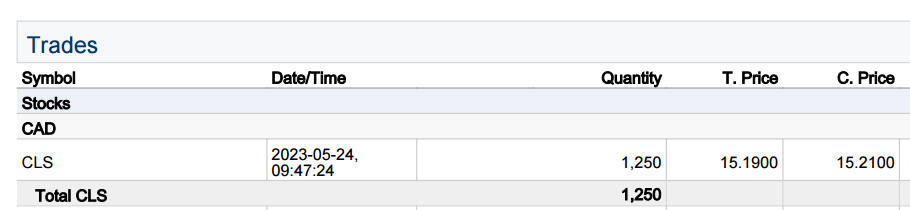

That was August 2023. CLS was trading at $30 a pop (Canadian dollars).

I’d been digging into the company for months and pulled the trigger in May. By August, the stock had nearly doubled. I was tempted to cash out—but I knew there was more runway ahead.

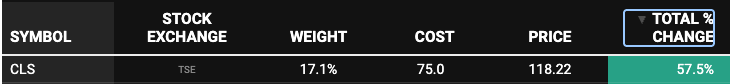

When I launched this newsletter, CLS was one of the first positions I added to Portfolio Canada.

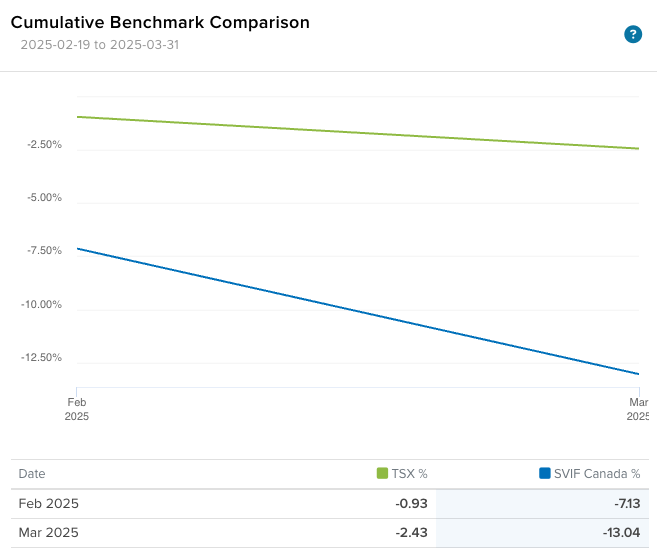

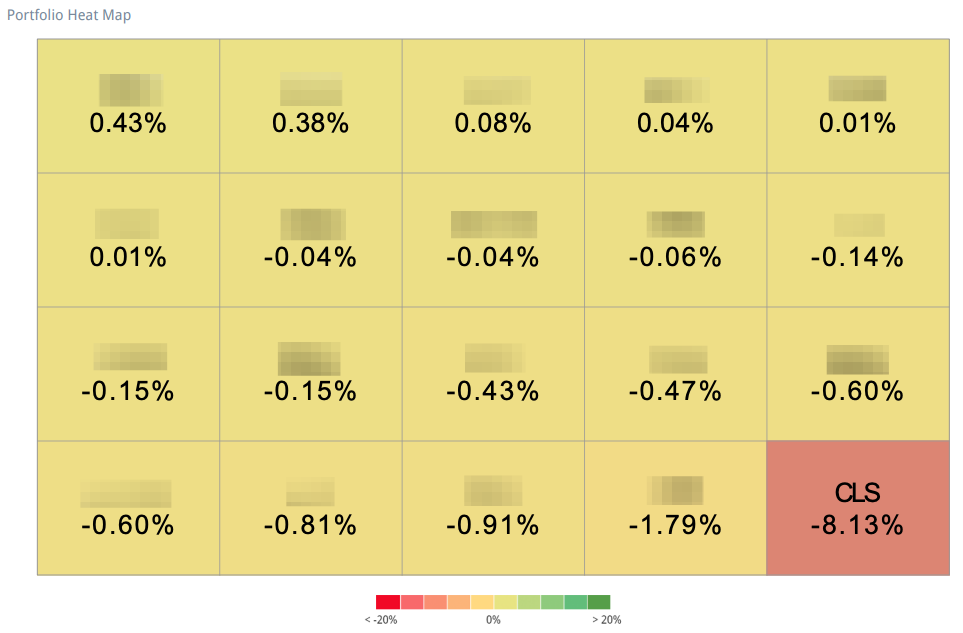

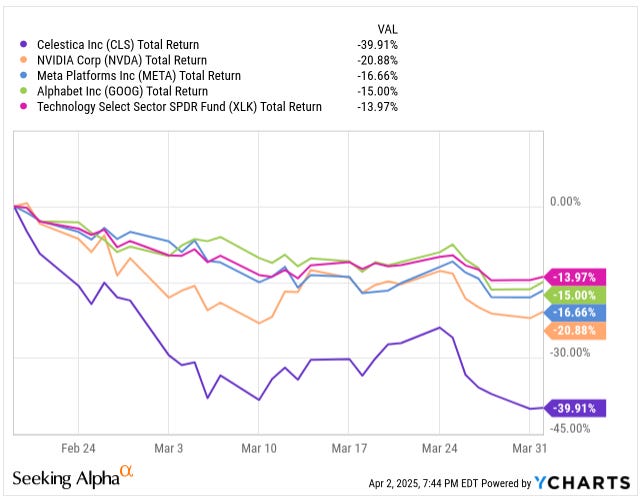

Since February 19, Portfolio Canada is down 13.04% versus just a 2.43% dip for the TSX.

The good/bad news? CLS explains 62% of that performance.
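To make that attribution concrete, here is a minimal sketch of the arithmetic. It assumes the 62% figure means CLS's share of the portfolio's total decline; the function name is purely illustrative.

```python
def contribution_points(portfolio_return_pct: float, share_explained: float) -> float:
    """Percentage points of a portfolio's return attributable to one holding."""
    return portfolio_return_pct * share_explained


portfolio_return = -13.04  # Portfolio Canada since February 19, in percent
tsx_return = -2.43         # TSX over the same period, in percent
cls_share = 0.62           # share of the portfolio's move explained by CLS (assumed meaning)

cls_points = contribution_points(portfolio_return, cls_share)
rest_points = portfolio_return - cls_points   # everything that is not CLS
gap_vs_tsx = portfolio_return - tsx_return    # underperformance versus the index

print(f"CLS contribution: {cls_points:.2f} pts")
print(f"Everything else:  {rest_points:.2f} pts")
print(f"Gap versus TSX:   {gap_vs_tsx:.2f} pts")
```

Under that reading, CLS accounts for roughly 8 of the 13 percentage points lost.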

The entire sector’s been taking a beating lately—thanks in part to the DeepSeek drama, rising trade tariffs, and ongoing supply chain headaches. Then there’s the softening in consumer electronics demand, which isn’t doing anyone any favors.

Quick recap if you missed it: DeepSeek, a new Chinese open-source LLM, came out swinging, boasting GPT-4-level performance. That spooked investors who suddenly saw a path for cheaper, commoditized AI models—undercutting the moat of expensive proprietary models and, by extension, the infrastructure arms race tied to them. If hyperscalers can get similar performance at a fraction of the training cost, maybe they don’t need to blow billions on custom racks and super-charged data centers every quarter. That was the fear, anyway.

Add on tariffs—which hit CLS hard since roughly 70% of their supply chain is in Asia—and you get the perfect storm. These new US trade restrictions have raised red flags about cost inflation, logistical snags, and the potential need to reconfigure global operations just to stay compliant. All that means margin pressure.

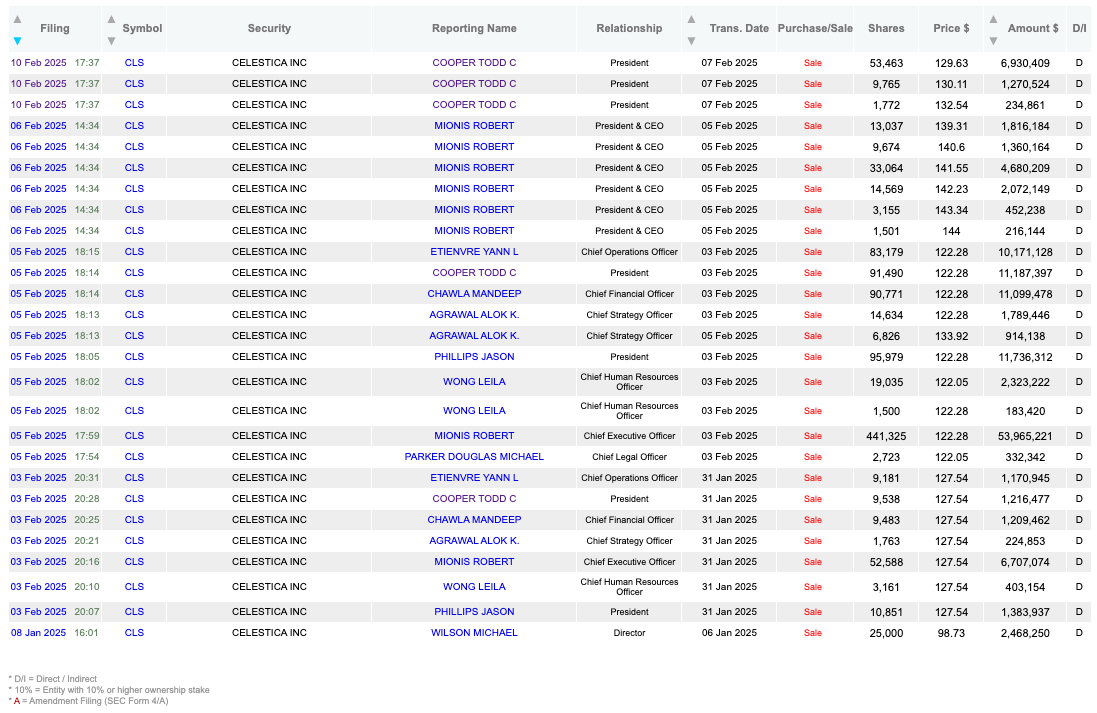

And yet, CLS has dropped more than the rest of the pack. Why? I think the market’s reacting—perhaps overreacting—to the sheer size of insider selling. Since the start of 2025, insiders have dumped $137 million worth of stock.

That kind of volume gets noticed. Maybe they know something we don’t. Or maybe they’re just de-risking in light of the macro headwinds and supply chain fragility. Either way, the optics aren’t great. When execs cash out like that, it shakes confidence—even if the fundamentals remain intact.

In case you’d rather not wade through 6,000 words of me rambling about Celestica, here’s a table of contents so you can jump straight to the good stuff:

The Backbone of AI: Celestica’s Role in the 400G/800G Data Center Revolution

Why AI’s Next Boom Isn’t Training—It’s Inference, and Celestica Is Ready

What DeepSeek Really Means for AI Infrastructure—and Celestica’s Upside

Celestica’s Quiet Transformation: From Commodity EMS to Hyperscaler Hero

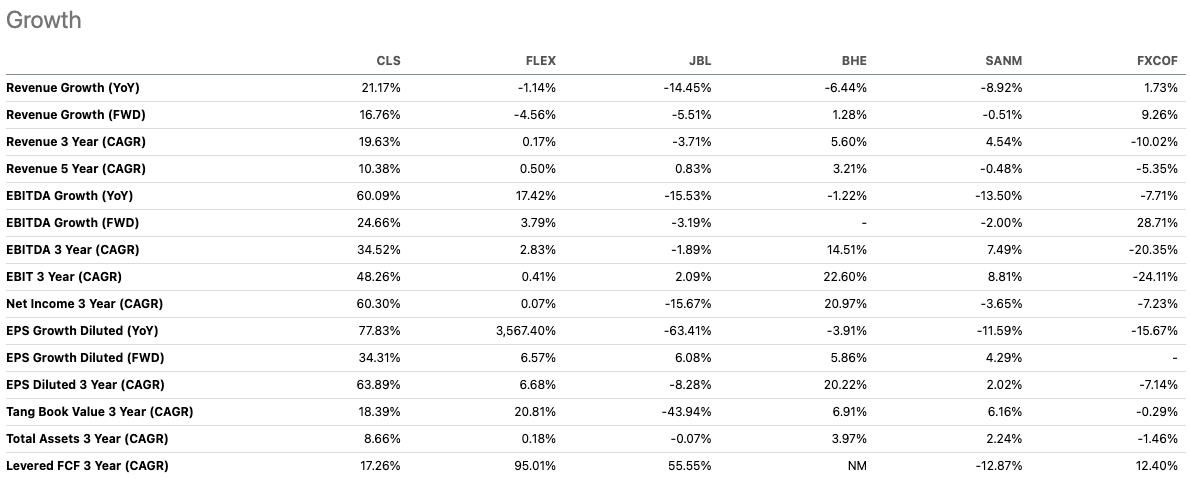

Let’s cut straight to the core: CLS is no longer a plain-vanilla electronics contract manufacturer. For years, they’d been lumped in with all those EMS (electronics manufacturing services) companies that churn out commodity hardware for razor-thin margins. But CLS has quietly transformed its business mix and sharpened its focus on AI, data-center infrastructure, and what folks like to call “hyperscalers” (think Microsoft, Google, Amazon). In the latest quarter, the AI demand wave helped CLS deliver the strongest quarterly results in the company’s history. If you’re looking for a no-frills reason to pay attention, that’s it. But I’m getting ahead of myself—let’s walk through the details.

At around 13x EBITDA, CLS doesn’t look cheap next to its historical average multiple of 7x, but that gap reflects the re-rating of the stock.

I know, I know—“AI play” is almost meme territory these days, and I have already written about other stocks benefiting from AI, such as POWL and TSMC. But CLS has a real seat at the table. Over the last five years, its Connectivity & Cloud Solutions (CCS) team built out data-center chops, forging relationships with top hyperscalers who are investing heavily, I dare to say “overspending”, on AI compute and storage infrastructure. Data center spending soared as the big guns—Microsoft, Alphabet, Meta—dumped mountains of capital into GPU-heavy servers and networking gear. CLS, which historically had been stuck in low-margin corners of the manufacturing world, found itself a significant supplier for these new AI-centric data centers.

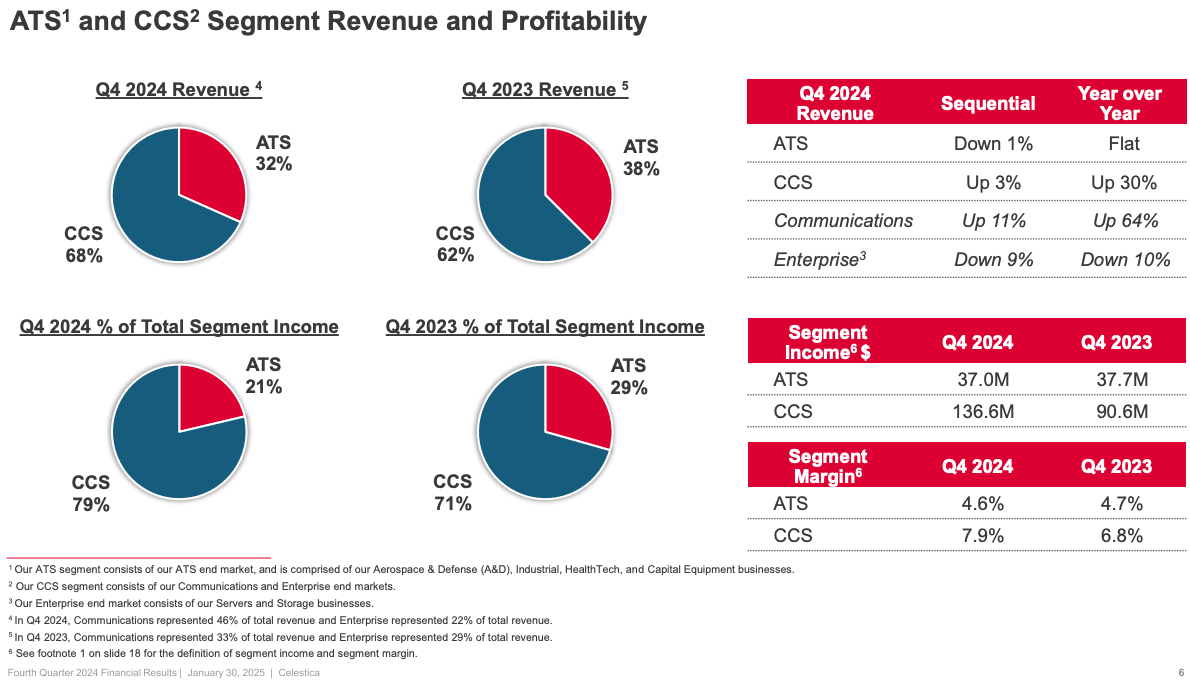

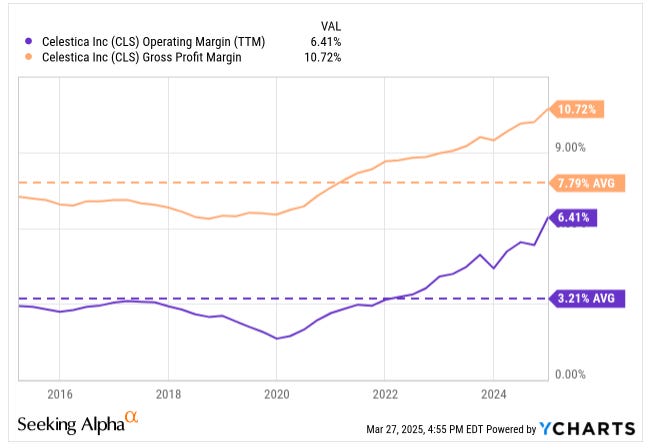

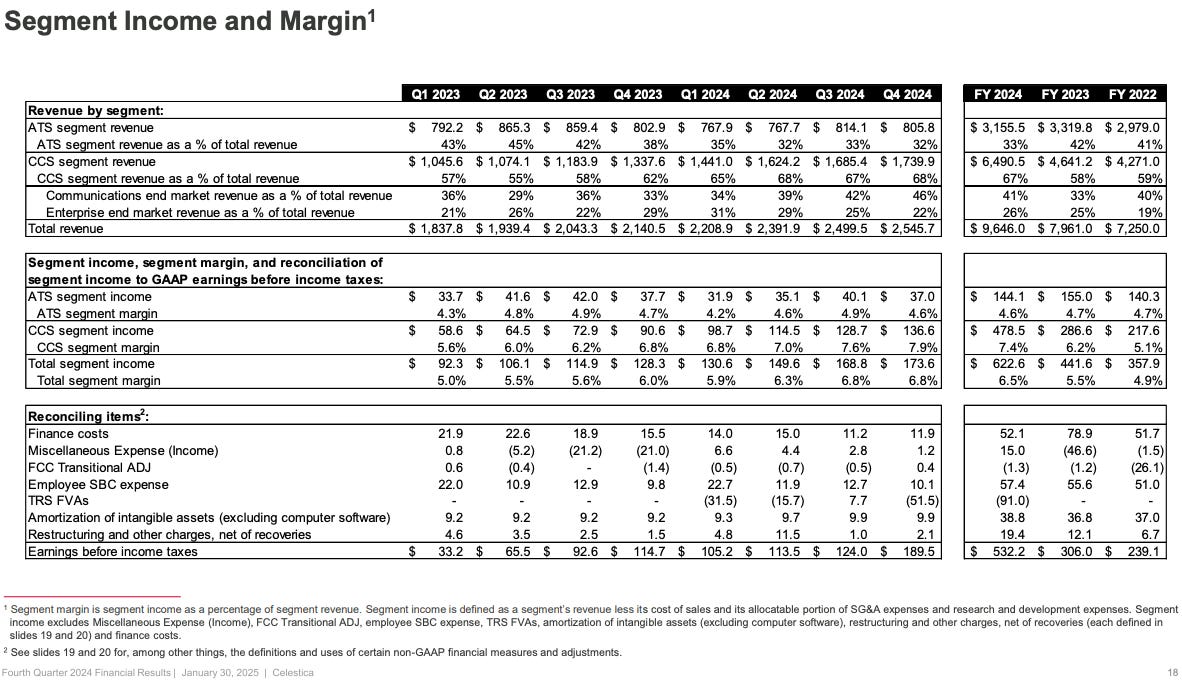

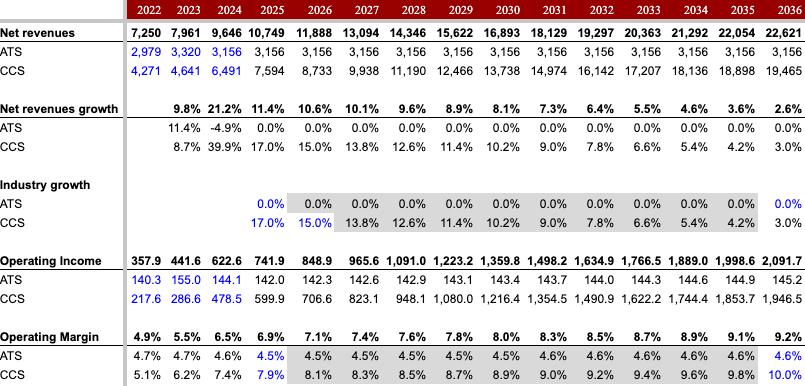

We’re talking real scale here. The CCS segment, which made up 68% of CLS’s total revenue last quarter, is humming along as the major cloud players keep shelling out for new AI supercomputers. Compared to Q4 2023, CCS revenues grew 30% while ATS stayed flat. Not only did CCS revenues grow, but the margins expanded too—from 2.2% in 2017 to 7.9% by 2024—making the business more profitable.

It’s an arms race, and no one wants to let the others get too far ahead, even if they’re blowing billions in the process. It’s a major tailwind for CLS because HPC (high-performance computing) servers and advanced networking gear—400G, 800G—need CLS’s design know-how and manufacturing muscle. Margins on these advanced products are better, too, thanks to all the specialized engineering CLS can bake into them.

And there’s real endurance to the cycle. Sure, some skeptics think AI data-center spend will spike for a year or two and then settle down. But we’re only scratching the surface on “inference” (the real-time usage side of AI models), which could keep this ball rolling far beyond the initial wave of training hardware. Amazon has said that for every $1 spent on training, up to $9 could be spent on inference—so if you’re building out the hardware to handle that throughput, you’re not exactly tapping the brakes anytime soon.

Now, none of this would matter if CLS’s finances were stuck in 2016. But the good news is, margins have been climbing steadily—operating margins are now above 6%, gross margins are in double digits—and there’s a solid case they keep improving.

CLS’s mix shift away from the old, lower-margin programs into advanced tech projects is a big reason for that. Furthermore, CLS’s Hardware Platform Solutions (HPS) offerings—a fancy way of describing highly engineered, partly proprietary reference designs—are in demand because they save hyperscalers time and money. HPS projects are typically single-sourced, so once CLS is in, it’s very hard to knock them out. That dynamic supports margin expansion and revenue visibility, two things that used to be elusive for them.

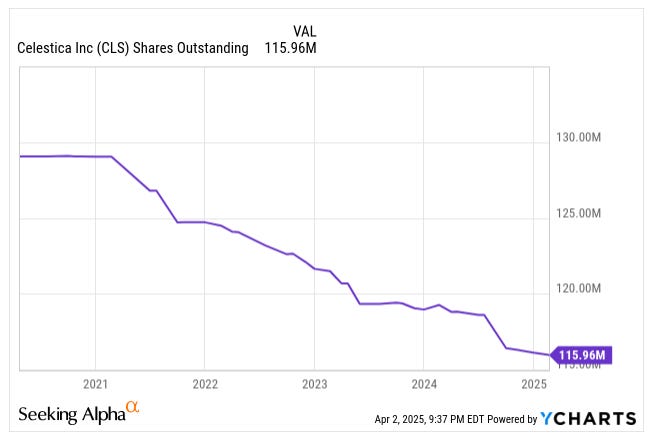

On top of all that, management has been busy on capital returns and smart moves: they’ve shed some of the legacy, lower-margin customers such as Cisco, and they’ve ramped share buybacks.

With net leverage under control and solid free cash flow, CLS is no longer just a “contract manufacturing afterthought.” The big hyperscaler programs are projected to surpass $4.6 billion of annual revenue next year, an enormous chunk of the entire CCS segment. Management’s even guided 10%-plus revenue growth, expecting the AI wave to continue—and that’s without accounting for potential upside from sovereign-level AI build-outs or new enterprise wins. Throw in a potential rebound in their industrial segments (like aerospace), and you’ve got a few nice catalysts.

What I find compelling is the multiple re-rating angle. Historically, EMS companies often sit at single-digit EV/EBITDAs because they’re viewed as cyclical, commoditized, or overshadowed by Apple-supplier mania. But CLS’s pivot towards “AI server in a box” for the biggest tech titans, plus a stickier and more design-intensive business, finally changed that narrative. If margins keep rising and AI spending stays white-hot, shares could hit $153—double the current share price.

CLS was once overlooked. Now? It’s cleaned up and has serious horsepower under the hood. The big question—like with any cyclical tech theme—is whether these massive AI data center spends can sustain momentum. But with a clean balance sheet, better margins, and a buyback lever ready if the stock lags, CLS has plenty of ways to keep us interested.

So that’s the gist: a once-overlooked stock, now rewired for faster growth and fatter margins, thanks to AI-fueled demand for high-performance hardware. They’re deep in with the hyperscalers (on both storage and server gear), and as these AI servers get more complex, CLS’s margin story just keeps getting better. Is there risk? Sure—if AI capex cools or CLS loses a top cloud customer. But that’s the ante in this game, and the risk/reward still looks favorable at these levels.

This is the brief thesis. If you want more detail, go grab your scuba gear because we are going Titanic deep on this one.

Breaking Down Celestica’s Revenue Engine: The AI Rack Builders Behind Big Tech

CLS originally started out as an internal IBM manufacturing arm—basically IBM’s in-house factory—building electronics for them for over 75 years. In 1993, CLS started offering services to outside customers, and by 1996, a group led by Onex Corporation acquired the business from IBM. CLS went public in 1998, and for a long time, Onex remained the controlling shareholder. That finally changed in 2023 when Onex offloaded its stake through a couple of secondary offerings totaling 18.8 million shares. Alongside that, all of Celestica’s multiple voting shares (MVS) were converted into regular common shares, effectively ending Onex’s control. So now, for the first time as a public company, CLS is fully independent—no dual-class structure, no dominant shareholder. Clean slate.

Reading the 10-K filings—or even CLS’s website—can get overwhelming, so let’s simplify. Unlike TSMC, which sells one main product (semiconductors), CLS sells a range of products to a mix of customers. A simple way to think about CLS: They help big companies design, build, and manage the hardware behind today’s tech products.

CLS is the behind-the-scenes tech contractor for big companies.

Or think of them as the smart hands backstage—the crew that builds the stage, the sound system, and the lights for a massive concert. The band—Amazon, Meta, Google—gets all the glory, but without CLS, the show doesn’t happen.

Specifically, CLS makes boring but critical hardware. They produce the servers and storage racks for data centers, networking gear that helps your internet work faster, and electronics for aerospace, healthcare and factories.

CLS divides its business into two main segments: CCS and ATS.

CCS (Connectivity & Cloud Solutions) is the tech-heavy side of the business. It focuses on:

Enterprise Servers & Storage: Big iron that processes and stores data—the brains behind a cloud platform.

Networking Equipment: Switches and routers that manage internet traffic, especially the cutting-edge 400G and 800G tech.

AI/ML Hardware: The real growth engine—building the hardware that powers training and inference for AI models.

For years, revenues in CCS were pretty flat, and margins hovered in the low single digits. In Q4 2017, CCS pulled in $1.06 billion with just a 2.2% operating margin. By early 2023, quarterly revenue was still near $1 billion, but margins had improved to 4.3%. That margin lift came from a better business mix, shedding low-margin contracts, and tightening operations.

CLS started leaning into higher-margin, engineering-heavy work. Under HPS, it helps design and build specialized hardware—think data center gear and high-performance computing—not just cranking out generic boxes. This work is more complex, higher value-add, and naturally carries better margins. HPS has grown into a larger slice of CCS.

CLS also walked away from low-margin, commodity-type work—like basic PCBs and legacy telecom gear. CLS once had a big relationship with Cisco, even winning awards like the “Excellence in Partner IT Collaboration” in 2010 and “EMS Partner of the Year” in 2015. Cisco designed the switches and networking gear; CLS built them. But by Q4 2020, the two had fully parted ways.

CLS also went hard on cost controls—lean manufacturing, smarter sourcing, and factory optimization all helped juice margins.

Starting in 2023, CCS finally broke out—revenues took off, and margins followed. By December 2024, quarterly revenue had jumped 66%, and margins nearly doubled to 7.9%.

That surge? It’s hyperscalers dialing up CLS for HPS projects. These are custom-built solutions that are sticky by design, making it tough to swap out CLS once they’re embedded. (More on this in the next section.)
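The CCS figures above compound: revenue growth and margin expansion multiply rather than add. The sketch below works through that, assuming the 66% jump is measured against the early-2023 quarter that carried the 4.3% margin. The text does not state the baseline explicitly, so treat that as an assumption.

```python
def operating_income_multiple(revenue_growth: float,
                              margin_start: float,
                              margin_end: float) -> float:
    """How many times larger segment operating income becomes when
    revenue grows and the operating margin changes at the same time."""
    return (1 + revenue_growth) * (margin_end / margin_start)


# Q4 2017 reference point from the text: $1.06B of revenue at a 2.2% margin.
q4_2017_income_musd = 1060 * 0.022

# Early 2023 -> December 2024 (baseline quarter is an assumption).
multiple = operating_income_multiple(revenue_growth=0.66,
                                     margin_start=0.043,
                                     margin_end=0.079)

print(f"Q4 2017 CCS operating income: ${q4_2017_income_musd:.1f}M")
print(f"Operating income multiple, early 2023 to Dec 2024: {multiple:.2f}x")
```

On those inputs, a 66% revenue increase turns into roughly a threefold increase in segment operating income, which is the operating-leverage story in one line.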

ATS (Advanced Technology Solutions) is CLS’s more industrial arm. It’s smaller—about 32% of total revenue—but adds much-needed diversification beyond the boom-and-bust cycles of tech.

ATS focuses on four key areas:

Aerospace & Defense: Components and systems used in planes, satellites, and military gear.

Health-Tech: Electronics for imaging systems, diagnostics, and surgical tools.

Industrial & Energy: Hardware for power tools, renewable energy setups, and factory automation.

Capital Equipment: The machines behind the machines—used to build semiconductors, displays, and other tech essentials.

This side of the business comes with a few perks:

Longer product lifecycles (we’re talking years, not quarters)

Stickier customer relationships—once you’re in, you tend to stay in

Higher-value services like design tweaks, testing, and ongoing support

So even though ATS doesn’t grow as fast as CCS, it’s steady, predictable, and a solid ballast for the more volatile tech business.

Why Celestica Wins: Sticky Clients, Smarter Designs, and a Global Edge

CLS’s edge over traditional EMS players? They’re not just wrench-turners—they’re full-blown technology partners. While most EMS firms are happy to assemble whatever blueprints they’re handed, CLS stands out with its HPS. That offering lets them co-design and build the ultra-specialized, high-stakes stuff—especially in booming areas like AI and next-gen networking. So, instead of just being an EMS player, CLS is more like an EMS/ODM (original design manufacturer) hybrid, with the scale of a manufacturer and the brains of a designer.

Another advantage comes from CLS’s strong relationships with hyperscale tech giants. These aren’t transactional, price-sensitive contracts—they’re long-cycle, high-touch engagements with real technical depth. CLS provides tailored, highly engineered solutions that are often single-sourced, which makes these relationships sticky and defensible. Winning these contracts comes down to technical chops, reliability, and global execution—areas where CLS has built real credibility.

Speaking of global execution, CLS also leverages its scale and integrated supply chain to its advantage. The company shares real-time data with its component suppliers and logistics partners, using its size and sophisticated IT systems to lock in better pricing, secure scarce components, and influence packaging and design. Most importantly, CLS acts as the procurement engine for its customers, buying and managing materials on their behalf. This is critical in today’s environment where most electronic components come from Asia, and some key parts only have a single supplier. In a world where one late chip can halt an entire rack shipment, CLS’s ability to keep parts flowing is a competitive edge that’s hard to see on a spreadsheet but invaluable in practice.

So while other firms are just filling orders, CLS is co-architecting the product, managing the supply chain, and delivering the whole thing, end-to-end. That’s the difference.

The Backbone of AI: Celestica’s Role in the 400G/800G Data Center Revolution

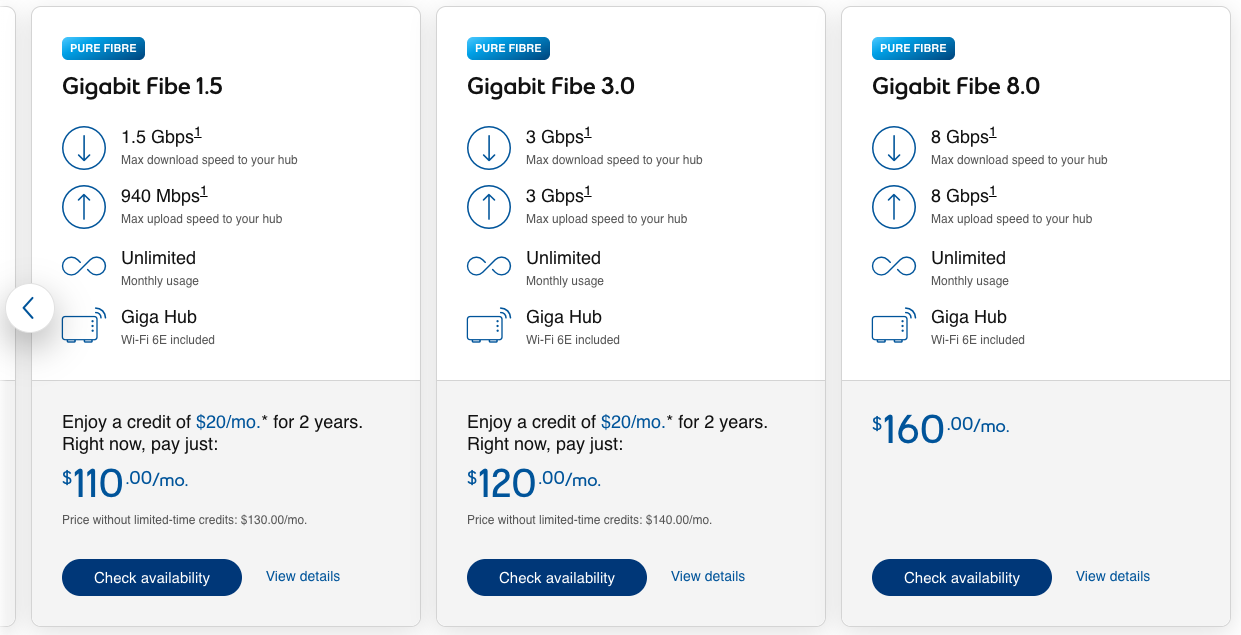

These switches? They’re basically the data highways of the internet. The “G” stands for gigabits per second, so a 400G switch can move 400 gigabits every second. For context, I’m still stuck at 100 Mbps (that’s megabits), and there are home internet plans offering 1.5G, 3G, and even 8G! (Not sure who needs 8G at home, but hey, that’s another rant.)

Data centers need way more speed than whatever we’ve got at home—thanks to the explosion in cloud computing, video streaming, and especially AI. That last one is key. AI and machine learning chew through massive datasets and need that data flying between servers at lightning speed. Slow switches create bottlenecks, so the obvious upgrade path is swapping out old 100G or 200G switches for newer 400G and 800G gear. These upgrades expand bandwidth between GPUs, nodes, and storage, cutting down on latency. In some setups, they’re even deploying optical interconnects inside racks to squeeze out more performance.
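To put the link speeds in perspective, here is a rough sketch of how long it takes to move a dataset across a single link at each speed. The 10 TB dataset size is a hypothetical figure, and the calculation ignores protocol overhead and congestion, so real transfers would be slower.

```python
def transfer_seconds(data_terabytes: float, link_gbps: float) -> float:
    """Idealized time to push a dataset through one link at line rate."""
    bits = data_terabytes * 1e12 * 8      # decimal terabytes -> bits
    return bits / (link_gbps * 1e9)       # gigabits per second -> bits per second


dataset_tb = 10.0  # hypothetical dataset size, for illustration only

for label, gbps in [("100 Mbps home line", 0.1),
                    ("100G switch port", 100),
                    ("400G switch port", 400),
                    ("800G switch port", 800)]:
    seconds = transfer_seconds(dataset_tb, gbps)
    print(f"{label:>20}: {seconds:,.0f} s")
```

Each doubling of port speed halves the idealized transfer time, which is why the jump from 100G to 800G matters so much when thousands of GPUs are waiting on data.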

But faster switches are just the beginning. Hyperscalers are basically tearing down and rebuilding data centers from scratch—rethinking power, cooling, layout, hardware, networking, all of it. Why? Because today’s AI racks are GPU-packed monsters that need 6 to 10 times more power than a traditional setup. More power means more heat, and more heat means serious cooling. You can’t just slap a fan on these things and call it a day.

So, what do they do? They’re using pipes and cold plates on the chips (liquid cooling), dunking entire servers into special coolants (immersion cooling), and slapping liquid-cooled panels on the backs of racks (rear-door heat exchangers). All of this lets them cram more AI firepower into tighter spaces. And they’re not just stacking servers—they’re building custom, all-in-one “integrated rack solutions” that combine compute, storage, networking, and power into one dense, high-performance unit. This is exactly where CLS comes in—co-designing and manufacturing those racks.

$1 Trillion and Climbing: Hyperscaler AI Spend and What It Means for Celestica

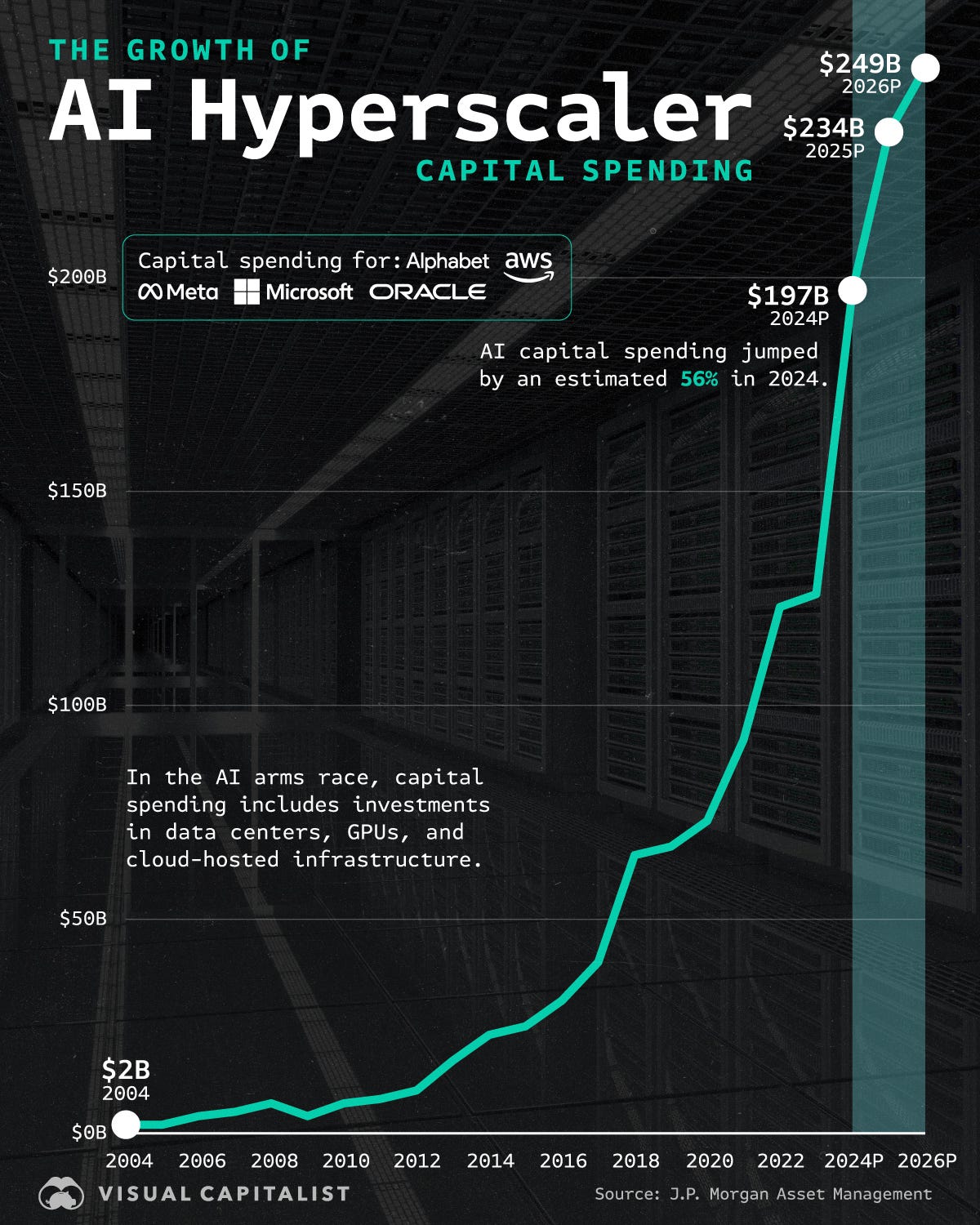

Back in 2004, global investment in AI barely scratched $2 billion. Fast forward to 2024, and it’s exploded to $197 billion. Shoutout to Visual Capitalist for the killer chart that captures this rocket ship trajectory.

And guess what? That number is only going up—Microsoft alone is projected to spend $80 billion in 2025 just on data centers!

Amazon is leading the pack, dropping around $75 billion in 2024, largely to supercharge its cloud service, AWS. Meta, meanwhile, is making a serious play in AI, investing $40 billion and snapping up 350,000 GPUs from Nvidia for its large language models. Google Cloud isn’t sitting idle either—it’s already seeing massive AI-driven revenue gains, with over two million software developers tapping into its platform.

A major challenge looming ahead? Keeping up with demand. AI requires a ton of storage and computing power, and as these companies race to expand, data center capacity is struggling to catch up. To put it in perspective, US data center property inventory grew by a staggering 43% annually in 2023 and 2024, while apartment inventory in the country barely budged at 2% over the same period.

So, in a nutshell, AI is fueling an investment frenzy, and the infrastructure needed to power it is growing at an incredible pace.

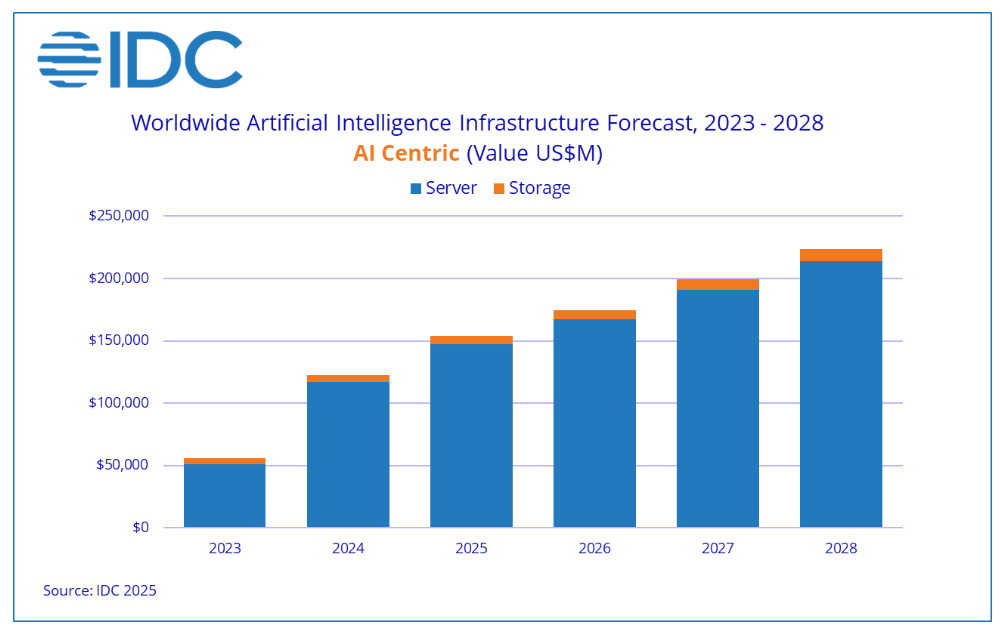

Looking ahead, the trajectory of AI infrastructure spending is poised for continued growth. IDC projects that global AI infrastructure investments will surpass $200 billion by 2028, reflecting sustained double-digit annual growth since 2019. This projection aligns with Gartner's forecast, which anticipates that by 2028, hyperscalers will operate $1 trillion worth of AI-optimized servers.

Despite the excitement, there are challenges ahead. Energy consumption is becoming a major concern, pushing companies to optimize architectures and minimize power use. Innovations in accelerated computing, AI model training efficiency, and next-gen network infrastructure will be crucial in making AI growth sustainable.

Given these drivers, I think the pace of AI infrastructure investment will keep humming at least through the medium term. The logic is simple: this demand isn’t just hot—it’s structural. As AI seeps deeper into industries like healthcare, finance, logistics, and defense, the need for serious computing firepower just keeps growing.

At the same time, hyperscalers are in an all-out brawl for AI dominance, and infrastructure is where the punches are landing. Owning the best hardware stack means owning tomorrow’s platform layer, so this spend isn’t just about short-term returns—it’s a long-term land grab.

Plus, the tech isn’t standing still. We’re talking bigger models, new use cases, and more data-intensive inference—all of which push computing needs even higher. AI’s not a side hustle anymore—it’s the main act. And the infrastructure arms race? Yeah, it’s just getting started.

Why AI’s Next Boom Isn’t Training—It’s Inference, and Celestica Is Ready

In the realm of AI, two critical processes are training and inference. Training involves teaching an AI model to recognize patterns by exposing it to large datasets. For instance, a model can be trained on thousands of labeled images to identify cats by adjusting its parameters to recognize feline features. This phase is computationally intensive and typically performed in specialized data centers equipped with high-performance hardware.

Once trained, the model enters the inference phase, where it applies its learned knowledge to new, unseen data to make predictions or decisions. Using the earlier example, the trained model can now analyze a new image and determine whether it contains a cat.

Inference is the deployment of the model into real-world applications, processing live data to generate outputs. This phase requires efficient computation to deliver quick and accurate results.

As AI applications become more prevalent across various industries, the demand for inference is escalating. While training is a foundational step, it is typically a one-time or periodic process. Inference, however, occurs continuously as models are deployed to handle real-time data inputs. For example, virtual assistants, recommendation systems, and autonomous vehicles rely on constant inference to function effectively. This ongoing need for inference translates to a sustained and growing demand for AI infrastructure capable of supporting these operations.

The focus of AI infrastructure is shifting toward inference. Currently, a significant portion of AI computational resources is dedicated to training large-scale models. However, as these models are deployed, the emphasis will move toward supporting inference workloads. Projections indicate that by 2030, the demand for inference infrastructure will surpass that of training.

DeepSeek Didn’t Kill the AI Boom—It Might Be the Gasoline

So let’s talk about DeepSeek—the $6 million LLM that had the market clutching its pearls. When the news dropped, headlines screamed that OpenAI and the other AI big dogs had just been undercut by a budget rival from China. Investors panicked. “What if all this AI infrastructure is overbuilt? What if training these monster models was a waste of money?”

But here’s the thing: everyone misunderstood the real takeaway.

DeepSeek didn’t mark the end of the AI infrastructure boom—it might’ve just kicked it into a higher gear.

Why?

Because now the cost to play in the AI space just dropped dramatically, and when something becomes cheaper, adoption skyrockets. It’s basic economics.

Think about what happened with solar panels: Once prices dropped, they weren’t just for eco-nerds and early adopters anymore—everyone started installing them. Households, businesses, factories. The same thing is about to happen with AI. Now that startups and smaller companies can afford LLMs without dropping $500 million on computing, they’ll start integrating AI into everything: customer service, logistics, HR, analytics—you name it.

That means more servers. More memory. More power. More cooling. More demand for the stuff CLS builds.

The beauty of DeepSeek isn’t just cost—it’s scale. These smaller, cheaper models unlock AI for the long tail of the economy. Not everyone needs a 200-billion parameter model trained on a nuclear-powered GPU cluster. Many companies just want something that works reliably at a fraction of the cost. With that accessibility comes a flood of new use cases, from SaaS apps to edge devices.

Also important: DeepSeek isn’t replacing OpenAI. It’s not a zero-sum game. Instead, it expands the total addressable market. ChatGPT remains the go-to for enterprise-grade solutions, while DeepSeek-type models fill in the gaps where it’s overkill or too expensive.

Celestica’s Valuation: Why $153 Is More Than Just a Number

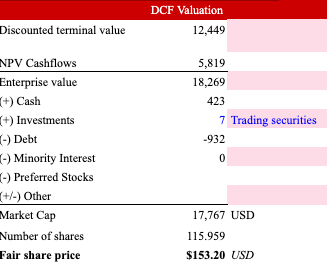

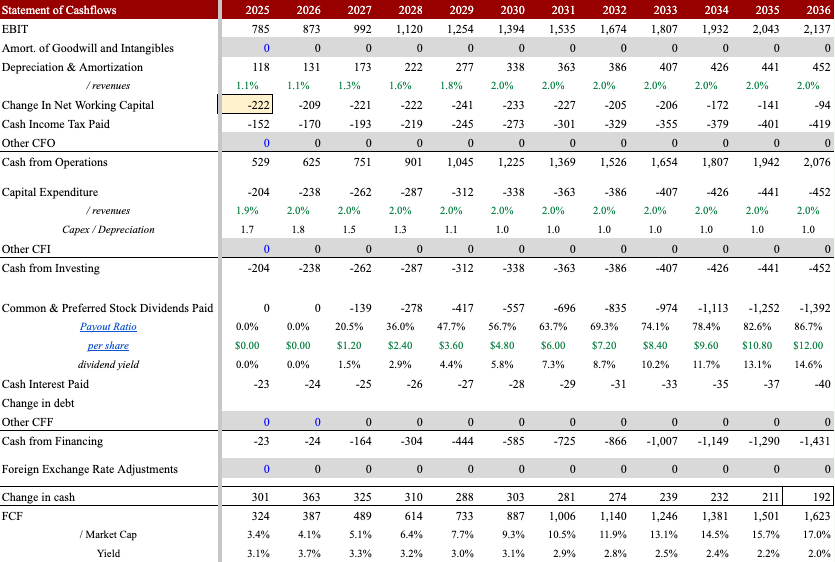

I estimate a fair value of $153 per share for CLS stock. That’s in USD, for the NYSE listing. (If you’re looking at the TSX, just multiply by the USD/CAD exchange rate.) I got there using a DCF model.

My cost of capital sits at 8.7%, based on an unlevered beta of 0.95 (adjusted for cash) and assuming an optimal debt-to-capital mix of 35%. Let’s walk through the assumptions.
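For readers who want the mechanics, a cost of capital like this is usually built by relevering the beta and blending the cost of equity with the after-tax cost of debt. The sketch below uses the unlevered beta (0.95) and debt-to-capital mix (35%) from the text; the tax rate, risk-free rate, equity risk premium, and cost of debt are hypothetical placeholders, not the inputs actually used in the model.

```python
def relevered_beta(unlevered_beta: float, debt_to_capital: float, tax_rate: float) -> float:
    """Hamada-style relevering: beta_L = beta_U * (1 + (1 - t) * D/E)."""
    debt_to_equity = debt_to_capital / (1 - debt_to_capital)
    return unlevered_beta * (1 + (1 - tax_rate) * debt_to_equity)


def wacc(unlevered_beta: float, debt_to_capital: float, tax_rate: float,
         risk_free: float, equity_premium: float, pretax_cost_of_debt: float) -> float:
    """Weighted average cost of capital using a CAPM cost of equity."""
    beta = relevered_beta(unlevered_beta, debt_to_capital, tax_rate)
    cost_of_equity = risk_free + beta * equity_premium
    after_tax_debt = pretax_cost_of_debt * (1 - tax_rate)
    return (1 - debt_to_capital) * cost_of_equity + debt_to_capital * after_tax_debt


example_wacc = wacc(
    unlevered_beta=0.95,        # from the text
    debt_to_capital=0.35,       # from the text
    tax_rate=0.26,              # assumption
    risk_free=0.043,            # assumption
    equity_premium=0.05,        # assumption
    pretax_cost_of_debt=0.06,   # assumption
)
print(f"Illustrative WACC: {example_wacc:.2%}")
```

With these placeholder inputs the result lands in the high-8% range, but a different risk-free rate or equity premium would move it, so the sketch shows the structure of the calculation rather than a reconstruction of the 8.7%.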

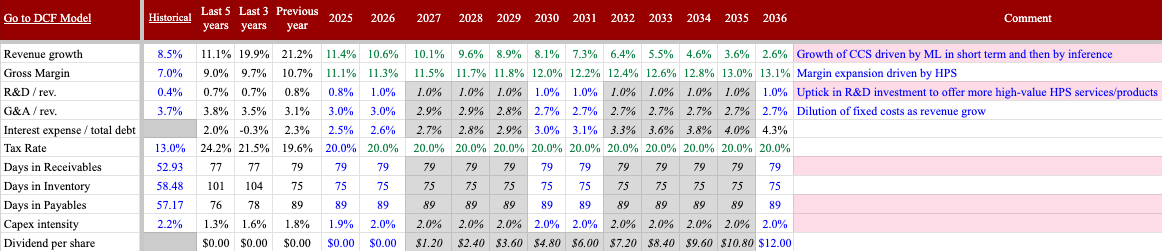

For revenue and margins, I went with a conservative approach. Take ATS—I modeled it with no growth and flat margins. In reality, given the current geopolitical environment, most countries are ramping up defense spending, which could very well boost demand for CLS’s products.

On the CCS side, I assumed decelerating growth. But honestly? I think double-digit growth has more legs than I gave it credit for, thanks to the sustained tailwind from inference demand. Margins are expanding as HPS becomes a larger slice of the CCS pie and CLS keeps dialing in operational efficiencies.

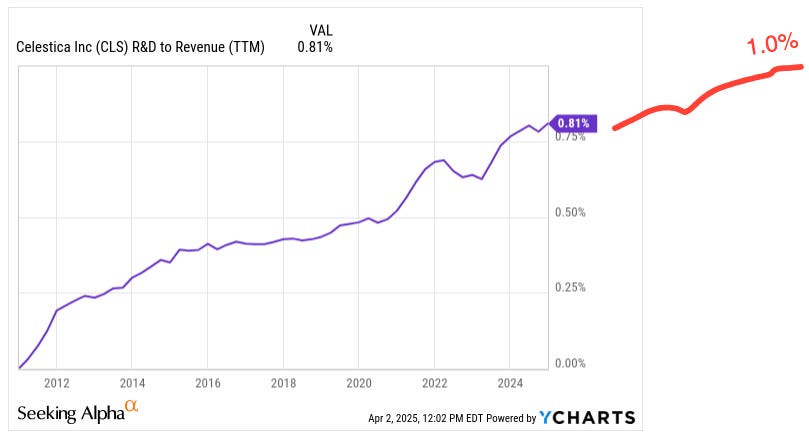

I expect R&D spending to rise, hitting 1% of sales—or about $143 million—by 2028, up from $78 million in 2024. This R&D push is key to keeping CLS’s HPS offering sharp and sticky. More innovation means more defensibility and helps protect the higher-margin business they’ve worked so hard to build.
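Those R&D figures carry two implied numbers worth spelling out: the 2028 sales level behind "1% of sales" and the annual growth rate in R&D spend. A quick sketch, assuming the 1% and $143 million figures are meant to be read together:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


rd_2024_musd = 78.0     # from the text
rd_2028_musd = 143.0    # from the text
rd_share_of_sales = 0.01

implied_2028_sales_musd = rd_2028_musd / rd_share_of_sales
rd_growth = cagr(rd_2024_musd, rd_2028_musd, years=4)

print(f"Implied 2028 sales: ${implied_2028_sales_musd / 1000:.1f}B")
print(f"R&D CAGR 2024-2028: {rd_growth:.1%}")
```

In other words, the forecast has R&D compounding at a mid-teens rate while sales reach roughly $14.3 billion.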

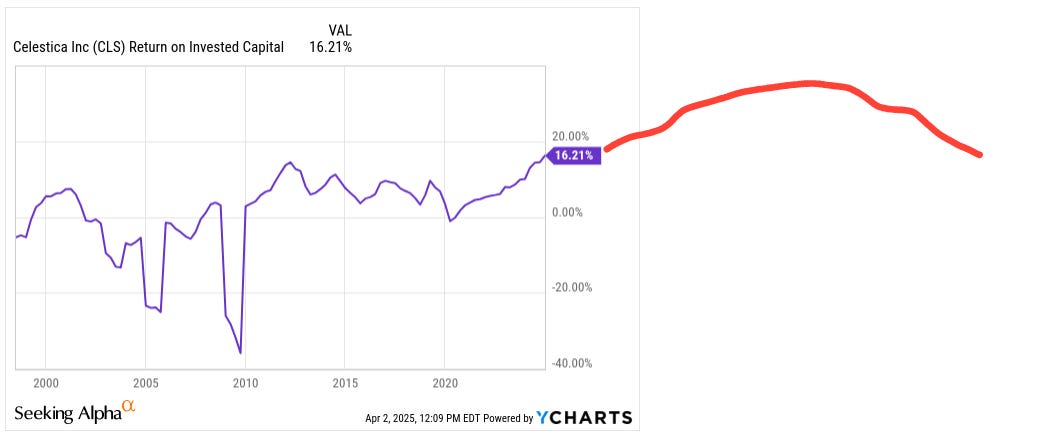

ROIC climbs until 2027, then gradually decreases—but still stays above the cost of capital. In truth, that decline might be milder than I modeled since I took a cautious view on inference. I actually think that the growth leg could run longer than I penciled in.

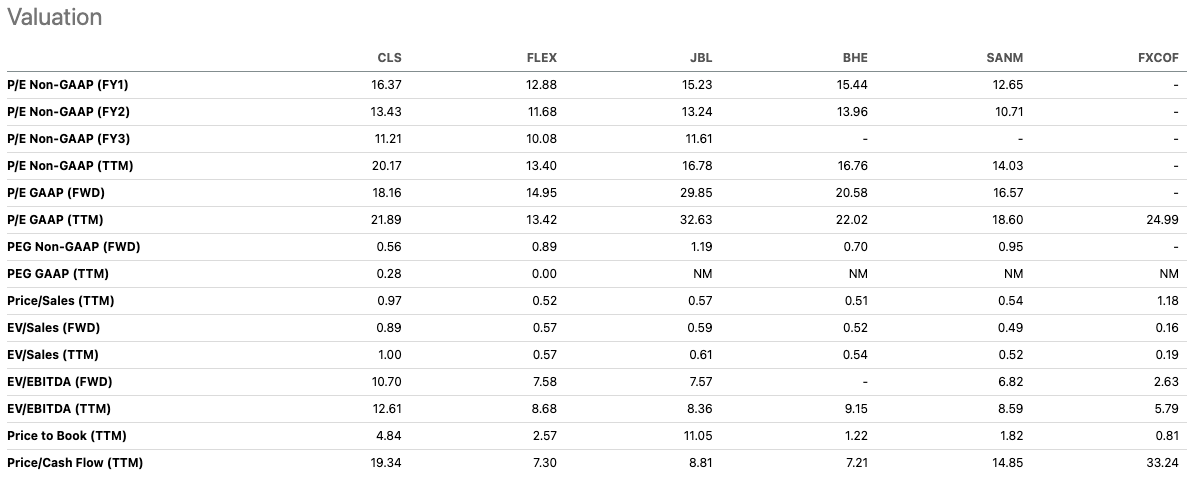

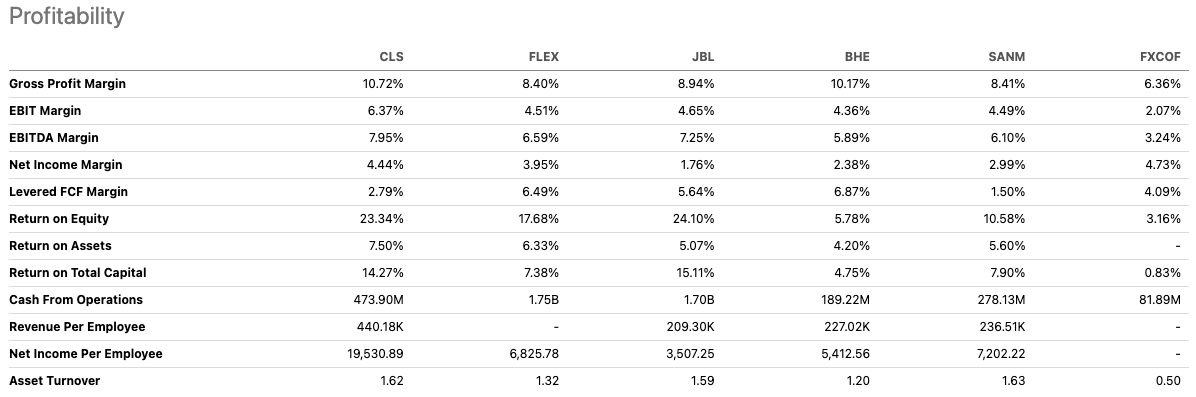

At first glance, CLS might look like it’s trading at a premium—but dig a little deeper, and the story flips.

CLS trades close to its peer group’s average P/E of 22x. But here’s the thing: CLS isn’t just another EMS—it leans more toward an ODM, which usually commands a premium thanks to better margins and stickier relationships. Most of its peers, except for Foxconn (FXCOF), are still stuck in EMS land, with only light ODM capabilities. So, frankly, CLS should be trading at a premium to them, not at parity.

Now, look at PEG ratios (P/E adjusted for growth): CLS comes in at 0.56—a discount even versus EMS peers, who sit at 0.94 on average. So you're getting more growth for every multiple point you're paying.
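Since PEG is simply P/E divided by the expected growth rate (in percent), the two PEG figures can be inverted to see what growth the market is pairing with each multiple. This sketch assumes both CLS and the peer group sit at roughly the 22x P/E mentioned above, which is a simplification.

```python
def implied_growth_pct(pe_ratio: float, peg_ratio: float) -> float:
    """Growth rate (in percent) implied by PEG = P/E / growth."""
    return pe_ratio / peg_ratio


pe = 22.0  # approximate P/E for both CLS and the peer average (assumed equal)

cls_growth = implied_growth_pct(pe, 0.56)
peer_growth = implied_growth_pct(pe, 0.94)

print(f"CLS implied growth:  {cls_growth:.1f}%")
print(f"Peer implied growth: {peer_growth:.1f}%")
```

At the same multiple, CLS is being paired with growth expectations in the high 30s versus the low 20s for peers.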

Sure, CLS trades at a premium EV/EBITDA versus Foxconn (FXCOF), but it earns that spot. CLS beats its peers across the board—on both growth and margin metrics. You're not overpaying for fluff; you're paying for a business with real operational leverage and upside.

Growing faster…

… with better margins.

On the downside, if sentiment sours and CLS trades back to 8x EBITDA—close to the EMS peer average—you’re looking at $50 per share, which still puts your risk/reward at around 1:3 from current levels. Not bad for a name this levered to one of the biggest capex stories in tech.
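The 1:3 figure can be checked with a few lines. The current price here is backed out from the earlier remark that $153 is double the current share price, so it is an approximation rather than a quoted price.

```python
def risk_reward(current: float, downside_target: float, upside_target: float) -> float:
    """Dollars of potential upside per dollar of potential downside."""
    downside = current - downside_target
    upside = upside_target - current
    return upside / downside


fair_value = 153.0               # DCF estimate from the text
bear_case = 50.0                 # 8x EBITDA scenario from the text
current_price = fair_value / 2   # approximation: "double the current share price"

ratio = risk_reward(current_price, bear_case, fair_value)
print(f"Downside: ${current_price - bear_case:.1f}  Upside: ${fair_value - current_price:.1f}")
print(f"Risk/reward: 1:{ratio:.1f}")
```

That works out to roughly $26.50 at risk for $76.50 of potential gain.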

A Quiet FCF Machine: Celestica’s Buyback Power and What Comes Next

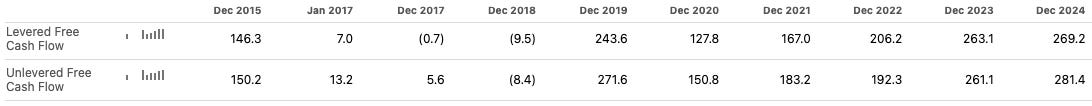

Since 2019, CLS has been a free cash flow machine—not the kind of company that shows “growth” on the income statement but bleeds cash everywhere else. This is cold, hard cash we’re talking about. From 2019 to 2023, CLS generated a cumulative $1.3 billion in free cash flow, with every single year solidly in the black. In 2024 alone, they generated $269 million in FCF.

This trend isn’t slowing down. With margins expanding thanks to HPS, operating leverage kicking in, and capex staying manageable, free cash flow should continue climbing. In my model, I see FCF crossing the $300 million mark this year.

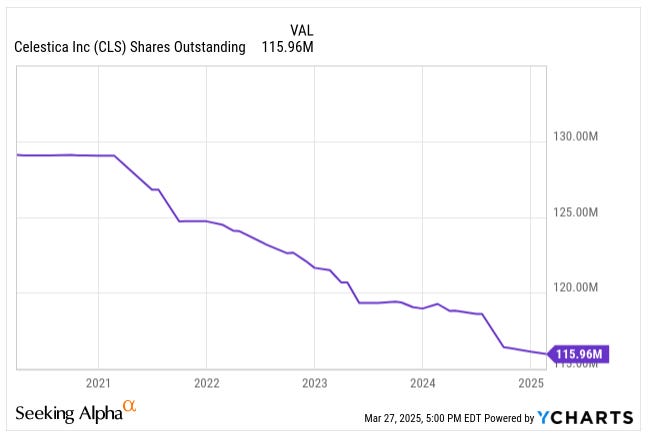

What do they do with all that cash? Well, they’ve already been putting it to work. CLS repurchased $620 million in stock between 2019 and 2024, reducing share count by 10% from 129 million to 116 million shares. The current buyback program still has firepower left, and management has hinted at expanding it.
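A shrinking share count matters because the same earnings get spread over fewer shares. The sketch below shows the effect using the share counts above, under the simplifying assumption that total earnings are unchanged.

```python
def share_count_reduction(shares_before: float, shares_after: float) -> float:
    """Fractional decline in shares outstanding."""
    return (shares_before - shares_after) / shares_before


def per_share_uplift(shares_before: float, shares_after: float) -> float:
    """Fractional increase in any per-share metric if the total is held constant."""
    return shares_before / shares_after - 1


shares_start_m = 129.0  # million shares, from the text
shares_end_m = 116.0    # million shares, from the text

reduction = share_count_reduction(shares_start_m, shares_end_m)
uplift = per_share_uplift(shares_start_m, shares_end_m)

print(f"Share count reduction: {reduction:.1%}")
print(f"Per-share uplift at constant earnings: {uplift:.1%}")
```

A 10% cut in share count translates into a bit more than an 11% lift in earnings or free cash flow per share, before any growth in the business itself.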

As CLS de-levers further, I wouldn’t be surprised if a dividend enters the picture, especially as more institutional investors start paying attention. With net debt/EBITDA now below 1x and FCF covering buybacks with room to spare, a dividend plan would make sense.

Celestica’s Three Big Risks

The biggest risks in the CLS story boil down to three things: AI capex uncertainty, customer concentration, and competitive pressure. Let’s unpack them.

Risk #1: AI Infrastructure Spending Slows or Disappoints

The core of the investment thesis hinges on continued aggressive capital investment by hyperscalers in AI infrastructure. The company’s CCS segment—particularly HPS—has seen explosive growth driven by AI data center buildouts. As previously mentioned, revenue from hyperscaler programs makes up more than 70% of CCS revenue and a growing share of total company sales.

However, there is a risk that this surge in AI-related capex could slow. Tech giants are currently in what can be described as a “dollar auction” for AI dominance—building now in anticipation of future monetization. But these investments are being made with long time horizons and unclear near-term returns. Microsoft CFO Amy Hood noted that their AI infrastructure spend is expected to pay off “over the next 15 years and beyond,” underscoring just how speculative some of this spending may be in the short term.

Should AI monetization disappoint or macroeconomic conditions tighten, hyperscalers could rationalize spending, and CLS’s top-line growth—and arguably its valuation—could take a hit. In Q1 2024 alone, AI infrastructure spending by US hyperscalers grew 97% year-over-year, reaching $47.4 billion, but this rate is unlikely to be sustainable indefinitely.
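To put the 97% growth rate in dollar terms, this quick calculation backs out the implied year-ago base from the two numbers quoted above. It assumes the 97% growth and the $47.4 billion total refer to the same quarter and the same group of companies.

```python
# Figures quoted in the text above.
current_spend_busd = 47.4   # Q1 2024 spending, in $ billions
yoy_growth = 0.97           # 97% year-over-year growth

# Back out the implied year-ago quarter and the absolute increase.
prior_year_spend_busd = current_spend_busd / (1 + yoy_growth)
increase_busd = current_spend_busd - prior_year_spend_busd

print(f"Implied year-ago spend: ${prior_year_spend_busd:.1f}B")
print(f"Absolute increase: ${increase_busd:.1f}B")
```

In other words, the quarterly run-rate roughly doubled in a year, which is why it is hard to see that pace holding indefinitely.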

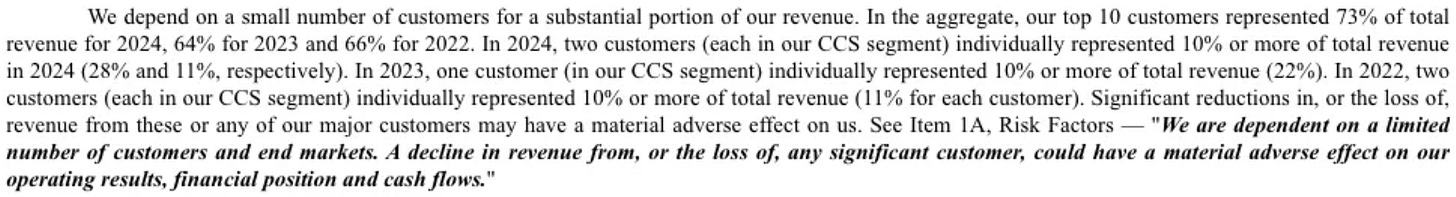

Risk #2: Customer Concentration Risk

As CLS’s AI business scales, so too does its exposure to a very tight circle of mega customers, mostly hyperscalers. These companies now drive a huge chunk of CLS’s growth, especially in the HPS business, where programs are often single-sourced and deeply embedded. In H1 2024, revenue from hyperscalers grew 93% YoY and now accounts for most of the CCS segment’s strength. That’s great for operating leverage but also a flashing yellow light when it comes to dependency.

And the numbers bear that out. As of 2024, CLS’s top 10 customers represented 73% of total revenue, up from 64% in 2023 and 66% in 2022. In fact, in 2024 alone, two customers in the CCS segment individually accounted for 28% and 11% of total revenue, respectively. That’s nearly 40% of the company’s entire top line in the hands of just two clients. It’s not new—CLS had similar levels of concentration in 2022 and 2023—but the scale of exposure is now even more tied to the AI infrastructure cycle.

This makes for a dangerous setup if anything goes sideways. Whether it’s a slowdown in AI capex, a strategic supplier switch, or CLS simply losing a bid to an ODM rival, the impact could be sharp and immediate.
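To gauge how sharp that impact could be, here is a minimal sensitivity sketch using the two customer shares reported for 2024. The order-cut scenarios (10%, 25%, 50%) are purely hypothetical illustrations, not forecasts, and the helper `revenue_hit` is a made-up name for this example. It also assumes all other revenue stays flat.

```python
# Revenue shares of the two largest CCS customers in 2024 (from the text).
top_two_shares = [0.28, 0.11]
combined_share = sum(top_two_shares)

def revenue_hit(customer_share: float, order_cut: float) -> float:
    """Fraction of total revenue lost if one customer cuts orders by `order_cut`.

    Hypothetical helper: assumes every other customer's revenue is unchanged.
    """
    return customer_share * order_cut

print(f"Combined share of top two customers: {combined_share:.0%}")

# Hypothetical scenarios for the largest customer only.
for cut in (0.10, 0.25, 0.50):
    hit = revenue_hit(top_two_shares[0], cut)
    print(f"Largest customer cuts orders {cut:.0%} -> total revenue down {hit:.1%}")
```

Even a partial pullback from the largest customer moves total revenue by several percentage points, before counting any knock-on effect on margins.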

Risk #3: Competitive Pressure from ODMs and Larger EMS Peers

CLS has carved out a niche by straddling the line between traditional EMS providers and ODMs. Its HPS business provides services few EMS peers can match at scale. However, this moat may be narrowing.

Firms like Wiwynn, Quanta, and Inventec, long-time ODMs with close ties to cloud providers, are aggressively targeting the AI server space. Wiwynn has been proactive in expanding its manufacturing footprint. They've set up a new facility in Johor, Malaysia, focusing on server cabinet assembly with advanced liquid cooling—crucial for handling the intense workloads of AI applications. Additionally, they've showcased AI servers featuring NVIDIA's GB200 NVL72 platform.

Quanta isn't lagging either. They've unveiled plans to establish three new factories in California, aiming to create state-of-the-art assembly lines specifically for AI servers. This strategic move places them closer to key clients like Meta, Microsoft, and AWS, ensuring faster delivery and tighter collaboration.

Inventec is also maneuvering to strengthen its position in the AI server market. Reports suggest it is considering acquiring manufacturing facilities to add production capacity and establish direct contact with end customers, which could help it meet growing demand for AI servers and deepen its relationships with major clients.

Simultaneously, large EMS competitors such as Flex and Jabil are investing in higher-margin, customized compute infrastructure to win share with hyperscaler customers.

Flex has introduced liquid-cooled server and rack solutions tailored for AI and high-performance computing workloads. Their collaboration with JetCool has resulted in rack-level solutions that efficiently manage the heat generated by powerful AI servers.

Jabil is expanding its server portfolio with high-performance servers powered by AMD and Intel processors. These servers are designed for scalability across various applications, including AI and high-performance computing. Furthermore, Jabil's investment in silicon photonics aims to enhance data transmission speeds, a critical factor in AI data centers.

While CLS enjoys a strong incumbency position with some hyperscalers, the barriers to entry are not insurmountable, especially as hyperscalers diversify their supply chains for geopolitical or cost reasons. Any misstep in execution or pricing pressure from competitors could erode margins in HPS and diminish CLS’s strategic value-add.

Liberation Day Tariffs: Headwind or Hidden Catalyst for Celestica?

As I was wrapping up this deep dive, news of Liberation Day broke—and it didn’t take long for the market to react. CLS’s stock dropped 9% in after-hours trading on April 2, as investors digested the implications of the new tariffs. The message was clear: the market sees this as a net negative for CLS. And honestly, I agree.

Because CLS operates across a global supply chain, tariffs on imported components—especially from Asia, where over 70% of its supply base is concentrated—will likely increase input costs. These costs may not be catastrophic, but they could put pressure on margins in the short term, especially if volatility causes delays or sourcing headaches.

Still, the optimist in me sees a silver lining. If these tariffs trigger a push toward reshoring or nearshoring, CLS could be one of the biggest beneficiaries. With its existing North American footprint, CLS is well-positioned to win new business from customers looking to localize production and sidestep tariff exposure. This fits with the growing trend of companies wanting more control and resiliency in their supply chains—a trend CLS has explicitly cited as a driver of growth in its ATS segment.

More importantly, CLS’s HPS business isn’t generic widget-building—it’s highly engineered, often single-sourced infrastructure. That gives CLS pricing power, which makes it more likely they can pass on higher input costs to customers rather than eat them.

In short, yes, Liberation Day brings turbulence, and CLS won’t be immune. However, its strategic position, North American operations, and deep integration into customer programs mean it’s more likely to adapt than get steamrolled.

Conclusion

Honestly, the whole CLS bull case felt easier to defend back in that ballgame booth. It was just me and a bunch of hedge fund bros who hadn’t read a 10-K since the iPhone launched. But now? CLS has done everything you’d want from a company in this position: perfect execution, a focused pivot into high-margin AI infrastructure, and steady, disciplined capital allocation. They’ve earned the re-rating.

If there’s one move that raised eyebrows, it’s the heavy insider selling this year—$137 million worth. It could be tax planning, could be diversification, or maybe some execs just needed to buy a boat. Either way, it hasn’t changed what the business is doing quarter after quarter.

So the question isn’t whether CLS is better than it used to be. It’s whether the market’s ready to price it like it.

Besides stocks, I invest in small businesses. If you are interested or want more details, email me at george@beatingthetide.com.

I launched the newsletter on Patreon in October 2024, but in January 2025, I migrated to Substack.